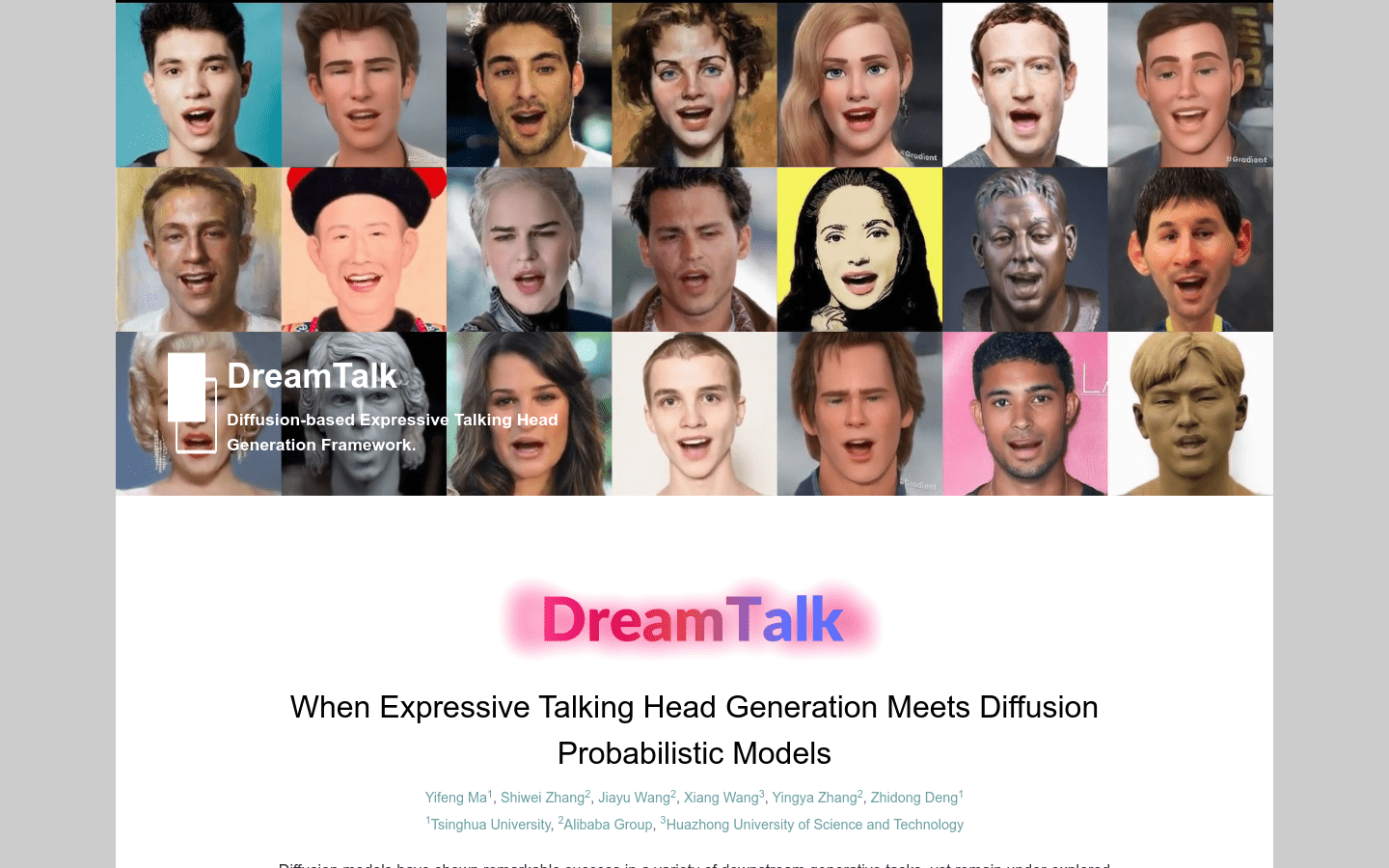

DreamTalk

DreamTalk: Diffusion Model for Expression Action Generation

Tags: AI image generation, AI avatar generation, Denoising Network, Diffusion Probabilistic Model, Expression Action Generation, Lip Movement, Open Source, Standard Picks, Style Prediction, Talking Faces

Introduction to DreamTalk: A Framework for Realistic Talking Face Generation

DreamTalk is a framework that generates expressive talking faces from audio using a diffusion probabilistic model. It consists of three core components:

- A denoising network: synthesizes high-quality, audio-driven facial motion by iteratively removing noise from a random sample.

- A style-aware lip expert: improves expression detail and keeps lip movements accurate while respecting the speaking style.

- A style predictor: predicts the target expression directly from the audio, without the need for video or text references.
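To make the interplay of these components more concrete, here is a minimal sketch of a standard DDPM-style reverse sampling loop in plain Python. It is not DreamTalk's implementation: `toy_style_predictor`, `toy_denoiser`, `sample_motion`, the linear noise schedule, and the one-number-per-frame "audio" and "motion" values are all hypothetical stand-ins chosen for illustration. The toy denoiser cheats by knowing the clean target, so the loop has a known answer to converge to; a real denoising network would be learned, and the lip expert (a training-time guide) is left out.

```python
import math
import random


def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule and cumulative alpha products (standard DDPM)."""
    betas = [
        beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        for t in range(num_steps)
    ]
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return betas, alpha_bars


def toy_style_predictor(audio):
    """Hypothetical stand-in: derive a single style code from the audio alone."""
    return sum(audio) / len(audio)


def toy_denoiser(x_t, t, audio, style, alpha_bars):
    """Hypothetical stand-in for the denoising network.

    It predicts the noise contained in x_t. For illustration it assumes the
    clean motion is audio * style, which a trained network would not know.
    """
    a_bar = alpha_bars[t]
    target = [a * style for a in audio]
    return [
        (x - math.sqrt(a_bar) * m) / math.sqrt(1.0 - a_bar)
        for x, m in zip(x_t, target)
    ]


def sample_motion(audio, num_steps=50, seed=0):
    """Start from Gaussian noise and denoise step by step, conditioned on
    the audio and on a style code predicted from that same audio."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule(num_steps)
    style = toy_style_predictor(audio)  # no video or text reference needed
    x = [rng.gauss(0.0, 1.0) for _ in audio]
    for t in reversed(range(num_steps)):
        eps = toy_denoiser(x, t, audio, style, alpha_bars)
        alpha = 1.0 - betas[t]
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(alpha) for xi, ei in zip(x, eps)]
        if t > 0:
            # Re-inject a little noise on every step except the last one.
            sigma = math.sqrt(betas[t])
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean
    return x


if __name__ == "__main__":
    print(sample_motion([0.2, 0.4, 0.6]))
```

The point of the sketch is the data flow: the style code comes from the audio, and the denoiser is called once per step with both the audio and that style code as conditions.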

Building on diffusion probabilistic models, DreamTalk offers:

- Synthesis of photorealistic talking faces across diverse languages and expressions.

- Less reliance on costly style references such as reference videos or text descriptions.

- Creation of realistic facial actions that closely mimic human expression variations.

Target Audience

DreamTalk is designed for a wide range of users, including:

- Filmmakers and content creators: looking to create lifelike virtual characters and animations.

- Virtual anchor developers: aiming to bring more engaging digital presenters to life.

- Human-computer interaction researchers: seeking natural, expressive interfaces for AI systems.

Applications of DreamTalk

DreamTalk offers versatile applications across multiple domains:

- Language and Expression Customization: Generate talking faces that speak different languages in an expression style chosen for the task.

- Film Production: Bring virtual characters to life by integrating realistic facial expressions and movements into animations.

- Human-Computer Interaction: Enhance user experience by implementing natural facial expressions and lip movements in AI-driven interfaces.

Key Features

DreamTalk’s standout features include:

- Realistic Face Synthesis: Uses a diffusion probabilistic model to generate photorealistic, detailed talking faces.

- High-Quality Audio-Driven Animations: Employs a denoising network to synthesize clean, high-quality facial actions synchronized with audio input.

- Enhanced Expressive Capabilities: The style-aware lip expert module ensures detailed expressions and precise lip movements for natural communication.

- Reference-Free Style Prediction: Uses a diffusion probabilistic model to predict the target expression directly from the audio, without requiring video or text inputs, which makes the system easier to use.
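As an illustration of what a lip expert can measure, the sketch below scores lip-sync as the cosine similarity between an audio embedding and a lip-motion embedding, then turns that score into a loss. This is the general approach used by SyncNet-style experts such as the one in Wav2Lip; this page does not say how DreamTalk's lip expert is built, so the function names, the two-dimensional embeddings, and the mapping from similarity to probability are assumptions, and the style conditioning is omitted.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # undefined for zero vectors; treat as "no agreement"
    return dot / (norm_u * norm_v)


def sync_loss(audio_emb, lip_emb, eps=1e-7):
    """Hypothetical lip-sync loss: negative log of a sync probability.

    The similarity in [-1, 1] is rescaled to [0, 1] and read as the
    probability that the audio and the lip motion are in sync.
    """
    prob = (cosine_similarity(audio_emb, lip_emb) + 1.0) / 2.0
    return -math.log(max(prob, eps))


if __name__ == "__main__":
    print(sync_loss([1.0, 0.0], [1.0, 0.0]))   # embeddings agree: low loss
    print(sync_loss([1.0, 0.0], [-1.0, 0.0]))  # embeddings oppose: high loss
```

During training, a loss of this kind can be added to the generator's objective so that generated lip motion is pushed toward agreement with the audio.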

DreamTalk advances AI-driven face animation by combining realistic synthesis with flexible control over expression. Because it predicts speaking style from audio and generates expressions with a diffusion model, it is a useful tool for professionals working in film, virtual reality, and human-computer interaction.